What makes code bad or hard to understand? Often, it’s poorly-managed complexity.

For reasons we can’t quite explain, complexity is always worse in someone else’s code, and it never seems like a big deal when we write complex functions ourselves.

Why is that? We may never know.

Jokes aside, this topic is an interesting one because understanding it helps us understand how to make code readable and maintainable (as well as hopefully containing fewer bugs).

“Any fool can write code that a computer can understand.

Good programmers write code that humans can understand.”

-Martin Fowler

Let’s explore the concept visually to see if we can get an intuitive feel for it, and perhaps develop some interesting or useful patterns. Because this turns out to be one of those things that anybody can spot, if you’ve got the right picture.

Cyclomatic complexity illustrated#

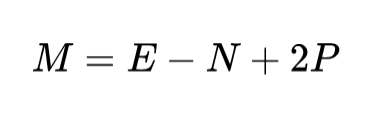

“Complexity” is an overloaded term with different numerical measures, but the most commonly used metric is “cyclomatic complexity” (aka McCabe’s cyclomatic complexity).

Cyclomatic complexity might sound complicated, but it’s really pretty straightforward (definition below).

It’s most often used when talking about a single function or procedure, like we will do in this post.

When measuring just a function by itself, P=1, so the result is just the number of edges minus the number of nodes, plus 2.

What this metric describes is how many linearly independent ways through the function, where “linearly independent” means that each way has at least one node that none of the others have.

Like in the code below, you can either go through the if or the else block, and these two different ways means it has a cyclomatic complexity of 2.

def isEven(n):

if (n % 2) == 0:

answer = 'even'

else:

answer = 'odd'

return answer

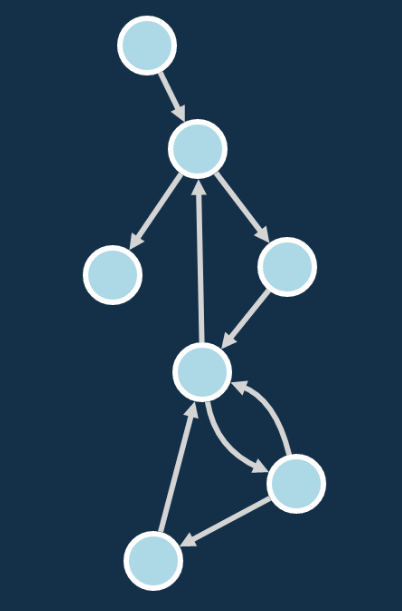

This isn’t always easy to see in a source listing, but its very apparent when you look at a function’s Control-Flow Graph (CFG), which shows all the way execution can flow in a function.

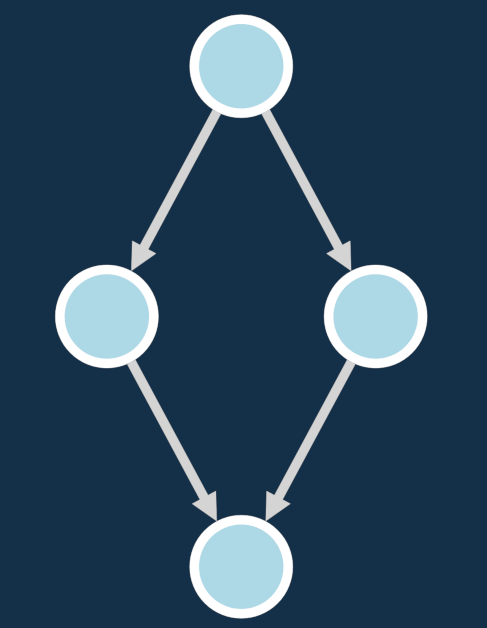

By taking the Python listing and transforming it into nodes with edges where the conditionals are, we get the CFG like the example below, which shows the two different paths.

The above version is annotated with the code, but when looking at larger graphs it’s easier to use simplified version like the one below that just shows nodes and edges.

A similar “isOdd” function for complex numbers adds another conditional, and has cyclomatic complexity that is one higher: 3.

def isOddComplex(n, i):

if (n % 2) == 0:

real = 'Real: even,'

else:

real = 'Real: odd,'

if (i % 2) == 0:

imaginary = 'Imaginary: even'

else:

imaginary = 'Imaginary: odd'

return real + imaginary

And while there are four unique “paths”, you can only pick up to 3 different ways through this graph if you define “different” to mean “each way contributes at least one new node that the others don’t have.”

Now let’s take a look at a slightly worse way to write this code. You might think it’s worse without being able to say why, but now we can name the reason: this version has a three total conditionals, giving it higher complexity (4 compared to the previous 3).

def nestedIsOddComplex(n, i):

if (n % 2) == 0:

if (i % 2) == 0:

answer = 'Real: even, Imaginary: even'

else:

answer = 'Real: even, Imaginary: odd'

else:

if (i % 2) == 0:

answer = 'Real: odd, Imaginary: even'

else:

answer = 'Real: odd, Imaginary: odd'

return answer

We can already see that conditional branches are what makes this measure go up, whether the conditionals are from ifs, for loops, or while loops.

And it makes sense: more conditionals lead to more cases to consider, hence increasing “complexity”.

Cyclomatic complexity tools for Python#

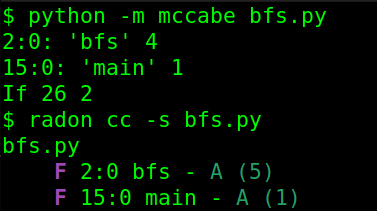

The most straightforward module to measure cyclomatic complexity in Python is to use the mccabe module which you can install via pip and run pretty easily: python -m mccabe test.py

You can also try out radon, which handles modules better and has some other complexity measures. radon is also pip-installable and easy to run, and you can filters for only high-complexity functions: radon cc -s -nD module/

We can run all of our tools on a familiar algorithm and see what the output looks like, starting with the venerable Breadth-first search (BFS):

def bfs(graph, node):

visited = [node]

queue = [node]

while queue:

m = queue.pop(0)

print(m, end=" ")

visited.append(m)

for neighbour in graph[m]:

if neighbour not in visited and neighbour not in queue:

queue.append(neighbour)

return

Now let’s try out the measurement tools on a little script that calls this function:

Complexity of 5 sounds right; two loops and two conditionals. Which we can see in the graphs, though the two conditionals ended up being grouped together in the same node (which they shouldn’t be for a CFG).

Yup, good ol’ BFS is complex enough to do something interesting, but not too much to get confusing.

Side note: if you want to make graphs like the ones in this post… I don’t recommend doing what I did, because there was a lot manual tweaking and I’m still not super pleased with how they turned out.

That being said, I used staticfg to parse Python code into CFG format. Its output is plain, which is what I wanted, but you might also check out py2cfg to see if you like its output better. The simplified versions of the CFGs were made using cytoscape.js and hierarchical layouts, which really helps for these kinds of graphs.

Why high complexity hurts your code (and your head)#

Taking a step back, why are we talking about CFGs so much here? A function’s CFG shows all the potential ways code can be executed in a function, so to truly understanding a function requires understanding the different possible behaviors.

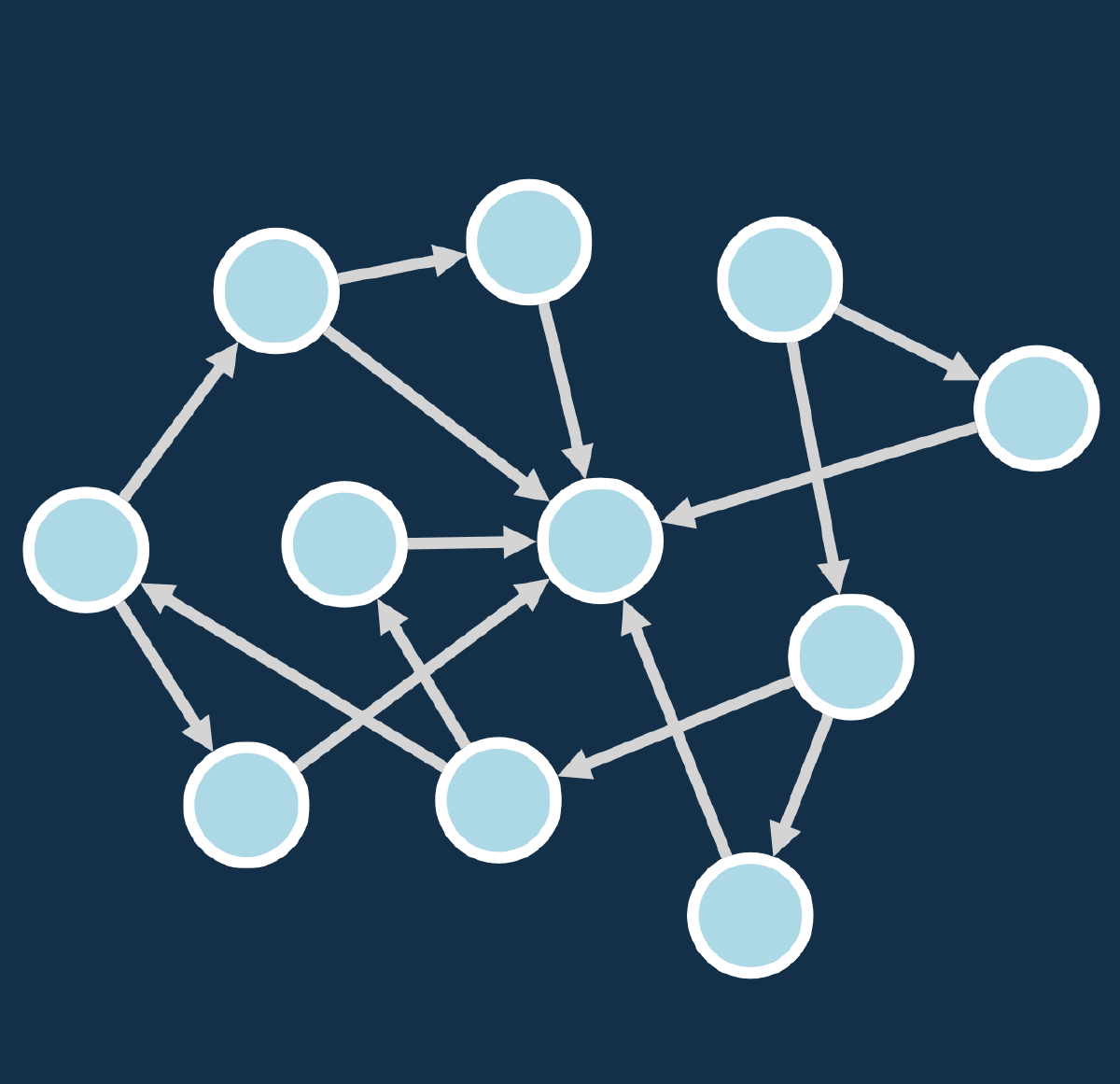

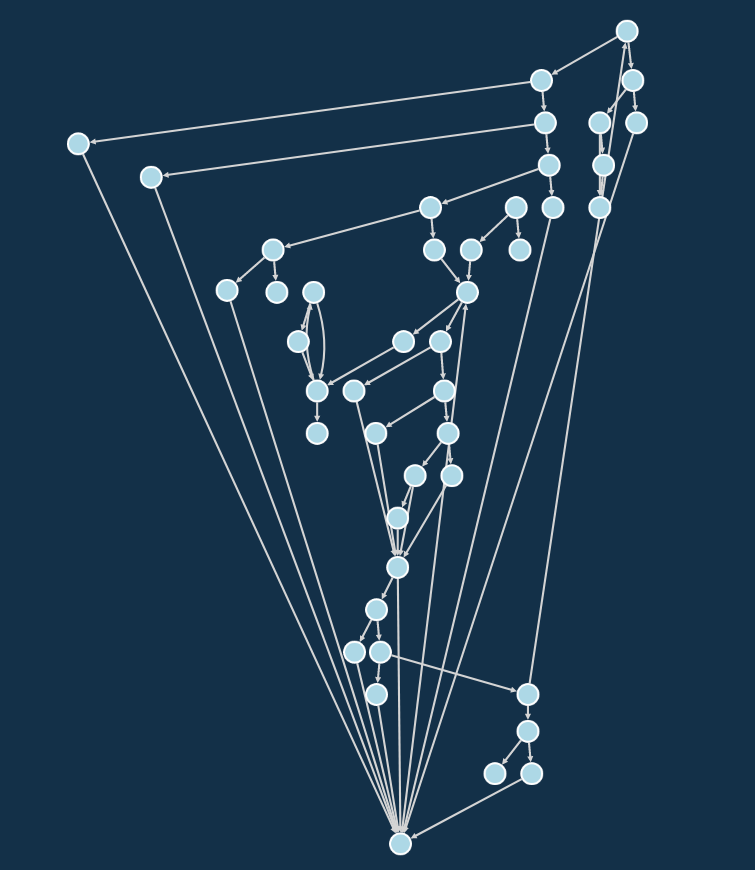

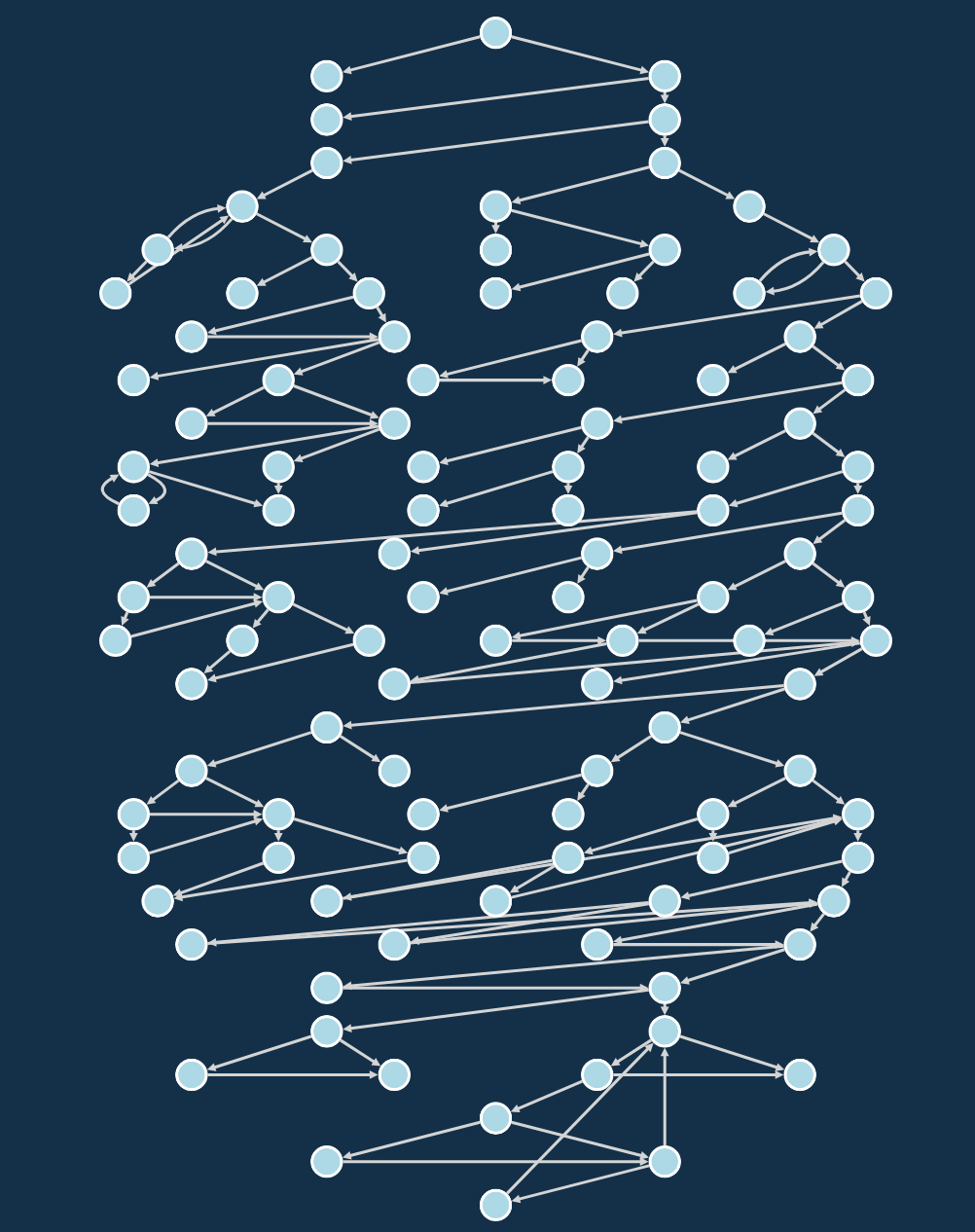

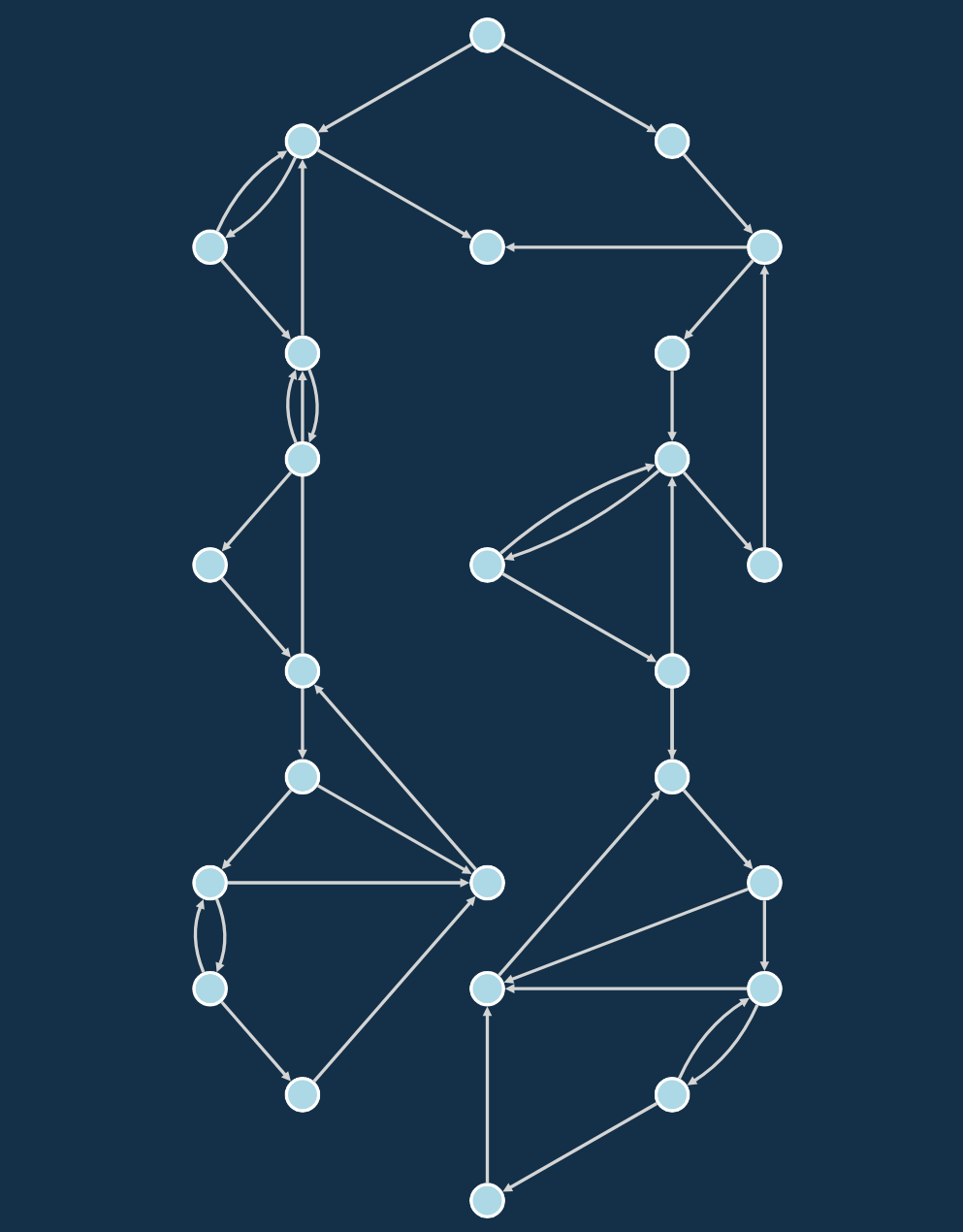

High cyclomatic complexity means there are a lot of different paths through a function, like in the example below, which has a cyclomatic complexity of 22. But 22 is just the number of “linearly independent paths”, which is pretty much the best-case scenario in terms of difficulty of understanding, because you’re not counting going through loops multiple times as “different.”

We’ll omit the long code listing and just focus on the graphs instead, remembering that in order to effectively work with this function you’d need to consider all of the paths here:

I don’t know about you, but this graph has a bit too much going on for my taste, even in the simplified version.

This is an example of “high” cyclomatic complexity, but it’s not the worst by any stretch… When assessing old Unix-style C programs, I’d regularly see scores over 100. So we can visually see a lot of different paths, but beyond CFG aesthetics, there are two serious issues that arise from this:

Lots of paths are hard to reason about#

Since there’s a limit to the number of potential behaviors we can hold in our heads, code that has too many paths is hard to reason about, which means it’s difficult to work with. You can get a rough idea of how bad complexity is just by looking at the CFG and gauging your visceral reaction to how hideous it is.

You might look at the graph of the complex code above and think “yeah, but the graph is harder to read than the actual code”, and you’re right…

Except that the linear format is a lie.

The gentle appearance of the code in your editor is a front; the true nature of that code is the beast of complexity you see in the graph, because that is the code’s actual structure. And that structure is what you have to wrestle with in order to understand, extend, or fix the code.

The bottom line is that if the CFG is ugly, it’s hard to think of everything that could happen. Which means that it takes more time to work with that code, whether you’re fixing, refactoring, or extending it.

But wait, there’s more! High complexity code hurts in ways other than just being cognitively taxing to read.

Lots of paths are hard to test#

Some of you may be thinking “hah, I could care less about test coverage!”. And maybe also “…and I don’t care if my lack of testing leads to bugs in production and the wailing and gnashing of teeth for my users!”.

Ok, I hope nobody says things like that. But if that’s you, I hope you enjoy the pictures anyway.

When you have code with high cyclomatic complexity, you’re probably not going to write enough unit tests for it. Why? Because the complexity score approximates the number of test cases you’d have to use in order to exhaustively test the paths (and that doesn’t even count things like different number of times through loops or data domain considerations!).

And this will shock you, but when I’ve audited codebases with functions that have complexity greater than 10, I usually find a lot less than 10 test cases for that function.

Causes of high complexity and what they look like#

I’ll share the naked truth with you: complexity is highly correlated with length of a function.

Functions that have high cyclomatic complexity are often ones that are really long, and vice versa. Generally the answer to managing complexity is breaking large functions into smaller ones (or classes), ideally in ways that make sense.

But here’s 3 specific causes of high complexity, along with illustrated examples.

Many sequential or deeply nested conditionals#

You’ll often see the first cause of high complexity in argument parsing or main functions, where there’s a lot of initial logic.

Often these functions started small but began to build up conditionals like so many barnacles, and before you know it your main function is big enough to be a romance novella.

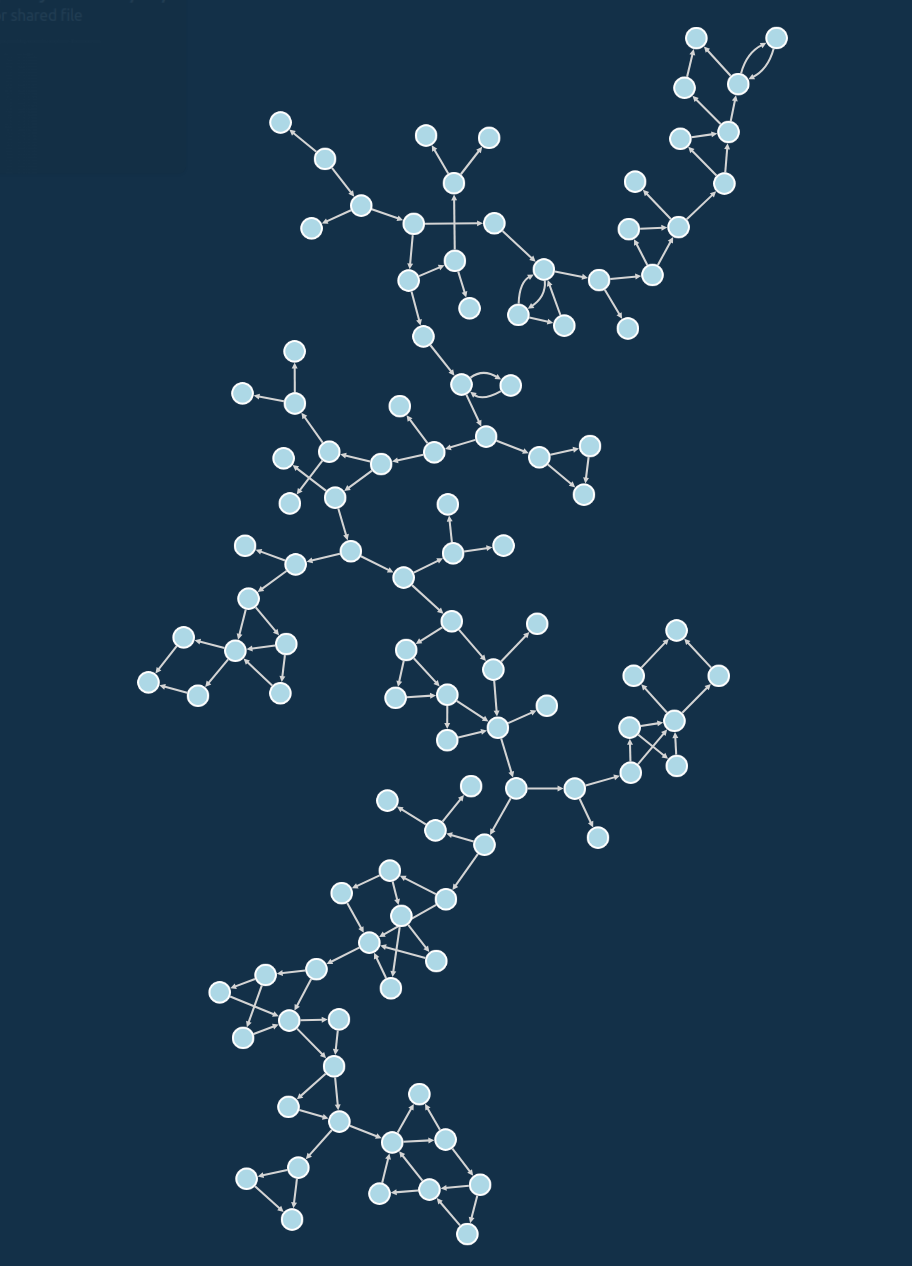

When graphed, these tend to have long trailing tree-like structures or will look very linear, depending on whether they tend to bail directly from the conditional.

The below function is an example of such a “main”-like function; omitting the code listing because it is several pages long, but suffice to say the complexity is high (92).

The simplified view shows that it doesn’t have hairball-like interconnectedness, but there’s just a lot of branches in this tree.

This example looks even better with a different layout that helps show that it’s just a long function with a lot of conditionals along the way.

Deeply nested conditionals are another common form of conditional stacking; the listing below has five levels of nesting and cyclomatic complexity 6.

def deep_nester():

if do_something():

if second_thing():

if third_thing():

if fourth_thing:

if fifth_thing():

print('top of the mountain!')

else:

print('fourth_thing() failed')

else:

print('third_thing() failed')

else:

print('second_thing() failed')

else:

print('do_something() failed')

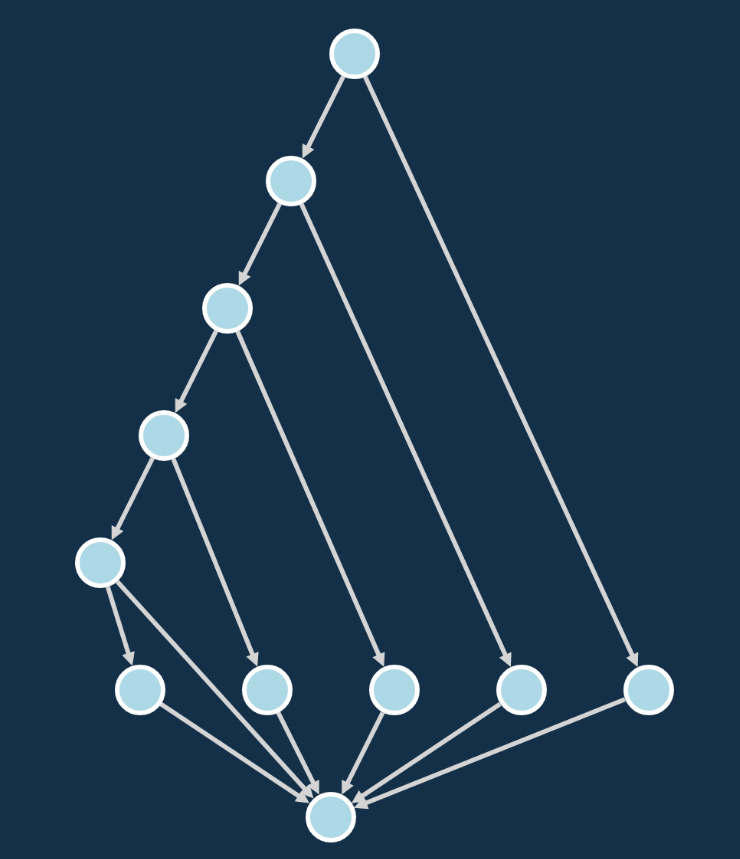

With the nicer nodes and edges of the simplified graph, we can see the stack of conditionals form a clump or diamond-like shape as they converge back to the return node.

Conditionals that have a large number of branch targets#

The second contributor of high complexity is conditionals with many branches (lots of elifs in Python, or switch statements in other languages), and it’s often required for functionality that has to handle many choices.

That being said, if you have a large switch construct, it helps to make the function contain as little as possible other than the switch itself.

A simplified example would be something that handles each day of the week, like this:

def dotw():

today = datetime.date.today()

w = today.weekday() + 1

if (w == 0):

print("Sunday 🌞")

elif (w == 1):

print("Monday... ")

elif (w == 2):

print("Tuesday 2️⃣")

elif (w == 3):

print("Hump day! 🐫")

elif (w == 4):

print("Thursday ⚡")

elif (w == 5):

print("TGIF! 🎉")

else:

print("It's the weekend 😎")

return

Which looks much like the deeply nested conditionals, arguably a bit cleaner despite its higher complexity score of 7, but that could just be due to node shapes and sizes:

Comparing the simplified versions, we see it is eerily similar. This suggests that switch constructs and deeply nested conditionals have a similar effect on a function’s structure and complexity:

If you’re trying to improve code like this, Python you can simplify it by using dictionaries to more concisely model switch statements. If we start with this example based on Flake8 rules that has complexity of 5:

def post_comment(self):

if self.success:

comment = 'Build succeeded'

elif self.warning:

comment = 'Build had issues'

elif self.failed:

comment = 'Build failed'

if self.success:

self.post(comment, type='success')

else:

self.post(comment, type='error')

By using a dictionary, we can write it like this:

def post_comment_improved(self):

comments = {

'success': 'Build succeeded',

'warning': 'Build had issues',

'failed': 'Build failed'

}

return self.post(comments[self.outcome], self.outcome)

And that yields a function with cyclomatic complexity of 1:

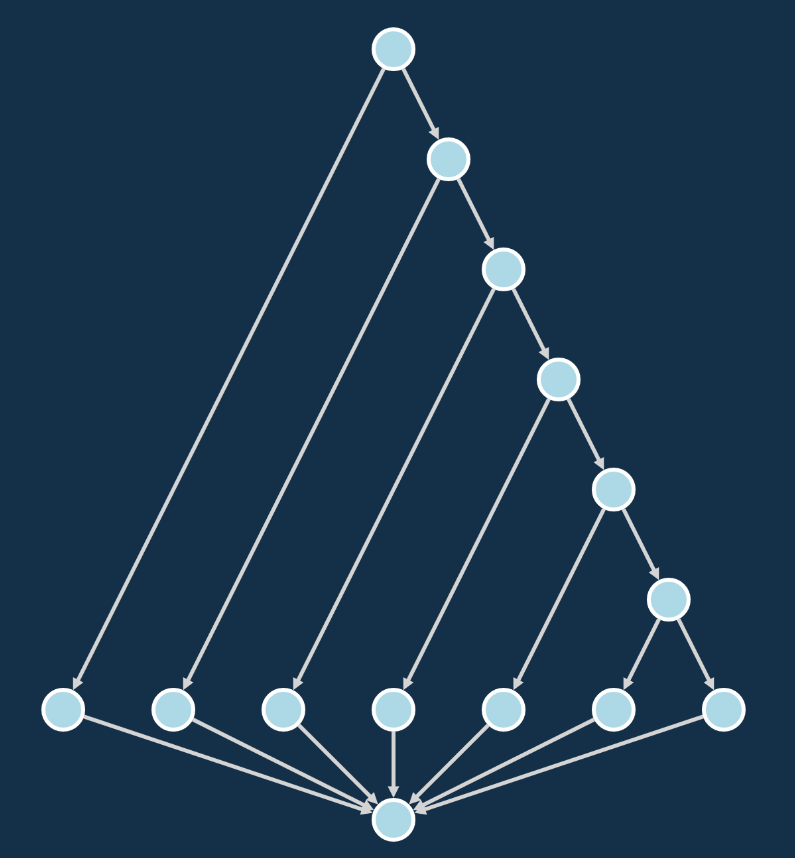

Large parallel paths#

The third and final cause of high complexity when you have parallel or unrelated paths in the same function.

You can usually spot this when you have an if/else construct where each has a really long body, or when you have several large conditional blocks in the same function (that could each be their own function).

If you were to graph these, you’d either see long parallel branches or a group of clumps that each re-converges (that should be their own functions), like this example with a complexity of 17.

This is one of those where the simplified CFG makes the structure of two largely parallel branches jump right out.

After examining each of these three causes, we can see how increased complexity looks in a CFG.

And the discussion so far has presumed we’re programming in a language that doesn’t have goto or an equivalent construct.

Direct branching via goto can either be used for good or as a particularly powerful tool for mangling CFGs and increasing complexity… but we’ll leave that discussion for another day.

How much complexity is too much?#

Numerical measures provide interesting guidelines and can help shepherd us toward best practices, but every situation is different.

The inventor of cyclomatic complexity originally recommended staying under 10 but more recently characterized it as a sliding scale of risk, with 20 being the beginning of “high” risk (whatever that means?). In any case, there’s no perfect one-size-fits-all, but many recommendations specify a limit somewhere between 10 or 20, or recommend balancing the need for lower complexity with other requirements.

One of the nice things about Python is that it forces whitespace on conditionals, so you can already see complexity just by the shape of a function’s indents. Since high complexity functions are often long, if you’re scrolling and scrolling still in the same function… it’s probably time to split up that beast.

Instead of worrying about a number, I think it’s better to ask “how hard is this to reason about, especially if I come back in a month and have to fix it?” Don’t give future-you a reason to hate present-you.

Simply put#

From the pictures and anecdotes, we now see the pattern: cyclomatic complexity is just a measure of how horrible a function’s CFG is going to be… which is an indication of how hard it fully understand the function, which leads to other issues.

This obviously isn’t the only kind of complexity in code, but it’s been a fun place to start!

If there’s any other types of complexity or visualizations of code metrics you commonly use, please let me know on Twitter. Otherwise just follow me there for more updates!